Researchers have achieved a new record for qubit reliability in superconducting quantum computing systems – overcoming an important barrier to quantum computing.

In a study published on February 27 in the journal Nature Communications, scientists from IBM, RWTH Aachen University in Germany and Los Angeles-based startup Quantum Elements tackled quantum error correction and suppression, the biggest obstacle to building machines more powerful than the fastest supercomputers.

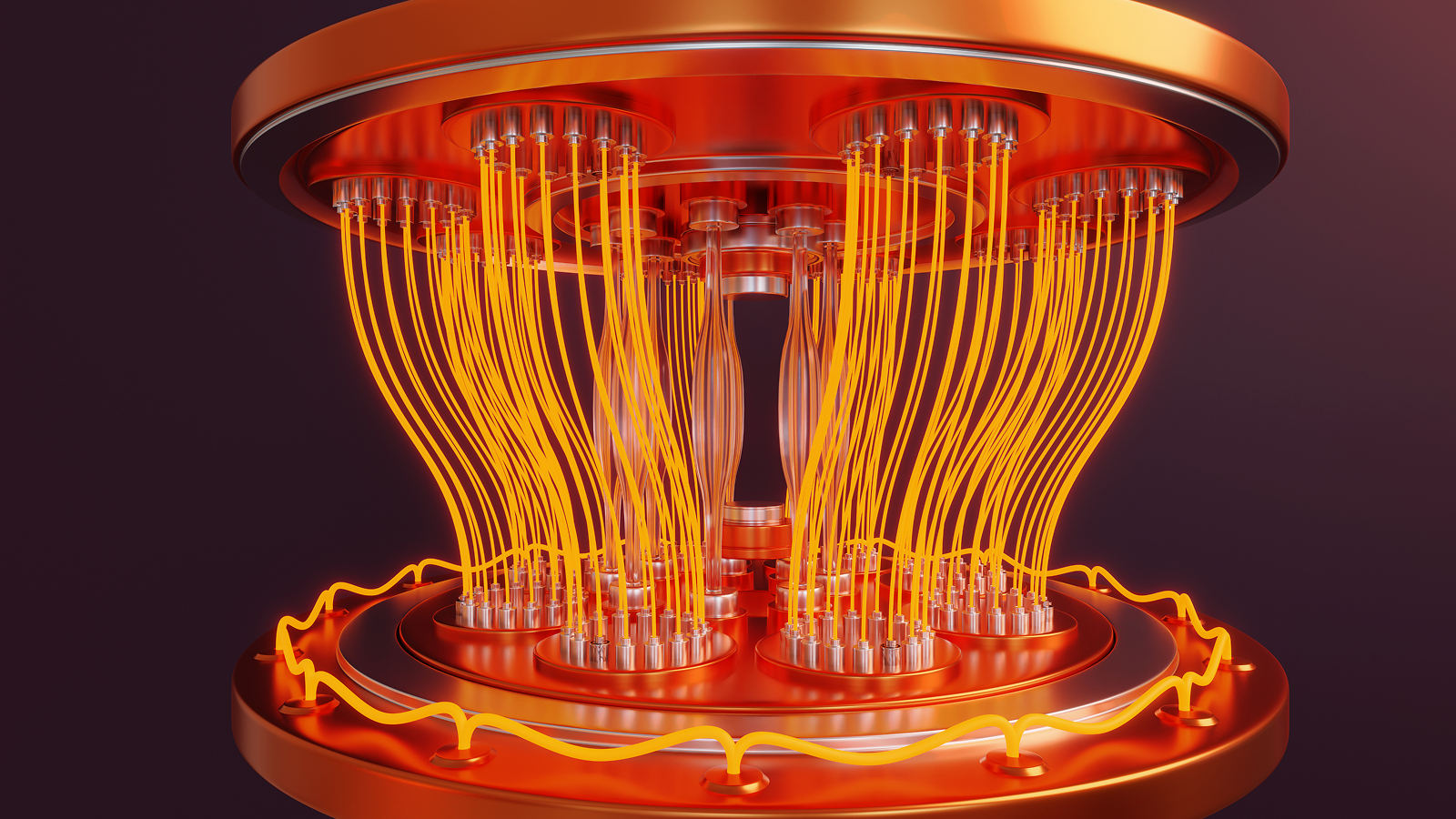

Superconducting quantum computers use quantum bits (qubits), the quantum equivalent of a classical computer’s bits, to perform calculations. The systems the researchers used – IBM’s 127-qubit Kyiv and Marrakesh processors – use a combination of “physical qubits” and “logical qubits,” which are groups of entangled physical qubits that store the same information in different locations, so that it survives if one physical qubit storing it fails.

Physical qubits are built into a quantum computer as complex, geometrically precise circuits made of superconducting metal. When cooled to near absolute zero, these metals lose their electrical resistance, allowing quantum information to flow without losing energy.

But these qubits can experience small disturbances, including vibrations, local noise and other environmental factors, which make them inherently brittle. To compensate for this shortcoming, scientists connect many qubits together to create a logical qubit.

When calculations are performed across the logical qubits, the physical qubits provide the redundancy that allows errors to be corrected. But the inherent problem with this setup, the scientists said in the new study, is that it is vulnerable to “logical errors.”

Logical errors occur when many physical qubits within a logical qubit succumb to noise. Normally, when one physical qubit fails, the others act as protection against its false signal. But when many qubits fail, the system treats the error they produce as a valid signal – and the quantum information is corrupted.
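The failure mode described above can be illustrated with a classical analogy. The sketch below is not the quantum code used in the study; it assumes a simple repetition code in which one logical bit is copied across several physical bits, each copy flips independently with probability `p_flip`, and the value is recovered by majority vote. The function names and parameters are hypothetical, chosen only for illustration.

```python
import random

def majority_vote(bits):
    """Return the value held by more than half of the bits."""
    return 1 if sum(bits) > len(bits) / 2 else 0

def logical_error_rate(n_physical, p_flip, trials=20_000, seed=0):
    """Estimate how often a majority vote returns the wrong logical value.

    Assumption: the logical value 0 is stored as n_physical copies of 0,
    and each copy flips independently with probability p_flip.
    """
    rng = random.Random(seed)
    failures = 0
    for _ in range(trials):
        noisy = [1 if rng.random() < p_flip else 0 for _ in range(n_physical)]
        # A logical error happens only when most copies flipped at once:
        # the wrong answer then looks like the valid majority signal.
        if majority_vote(noisy) != 0:
            failures += 1
    return failures / trials

if __name__ == "__main__":
    for p in (0.01, 0.05, 0.2):
        print(f"physical error {p:.2f} -> logical error {logical_error_rate(5, p):.4f}")
```

In this toy model a single flipped copy is always outvoted, while three or more flips out of five are silently accepted as the correct value, which is why reducing the number of simultaneous physical errors matters so much.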

Suppressing mistakes before they happen

The 127-qubit IBM system the researchers used has a specific type of noise called “ZZ crosstalk,” which is caused by the specific arrangement of its physical qubits.

The Quantum Elements team developed an approach to deal with this particular type of noise. It involves suppressing crosstalk errors before they occur, thus reducing the overall number of potentially undetectable errors. They combined this method with existing error-correction tools to create a new hybrid protocol.

As a result, the researchers achieved the highest fidelity, meaning the lowest noise, recorded with superconducting qubits, and sustained it for the longest recorded time.

According to the study, scientists had previously achieved peak encoding fidelities of 79.5% in one test and 93.7% in another, which dropped to around 30% after about 27 microseconds.

The peak-fidelity metric indicates the highest fidelity achieved in a quantum system, which occurs directly after the creation of a logical qubit. The longer a quantum computer can stay at or near peak fidelity, the better it can run quantum algorithms.

The team broke those previous records using a new technique called normalizer dynamical decoupling (NDD). They achieved 98.05% peak encoding fidelity and maintained 84.87% fidelity after 55 microseconds.

Conventional dynamical decoupling, a common technique for error suppression, involves using microwave pulses to force physical qubits to flip back and forth. This refocuses the qubits and averages out the background noise, but it does so one physical qubit at a time.
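To make the idea of flipping a qubit to cancel noise concrete, here is a minimal sketch of the simplest decoupling sequence, a single echo pulse. It is not the NDD protocol from the study. It assumes an idealized model in which each run of the experiment sees a fixed, unknown frequency offset (`delta`, in radians per microsecond) and the flip pulse is instantaneous and perfect; all names are hypothetical.

```python
import cmath
import random

def coherence(total_time, detunings, use_echo):
    """Magnitude of the ensemble-averaged phase factor exp(i * phase).

    1.0 means the qubit phase is fully preserved; values near 0 mean the
    phase information has been washed out by the noise.
    """
    total = 0j
    for delta in detunings:
        if use_echo:
            # A flip pulse at the midpoint reverses the direction of phase
            # accumulation, so the second half undoes the first half.
            phase = delta * (total_time / 2) - delta * (total_time / 2)
        else:
            # Without a pulse the unwanted phase simply keeps growing.
            phase = delta * total_time
        total += cmath.exp(1j * phase)
    return abs(total / len(detunings))

if __name__ == "__main__":
    rng = random.Random(1)
    # Assumed noise model: static Gaussian offsets, sigma = 0.5 rad/us.
    offsets = [rng.gauss(0.0, 0.5) for _ in range(2000)]
    for t_us in (1, 5, 10):
        free = coherence(t_us, offsets, use_echo=False)
        echo = coherence(t_us, offsets, use_echo=True)
        print(f"t = {t_us:2d} us  no pulse: {free:.3f}  with echo: {echo:.3f}")
```

Real noise drifts over time and real pulses are imperfect, so practical sequences repeat many pulses and do not cancel noise perfectly.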

But there is a problem with scaling up this method: the more physical qubits in the system, the more pulses you need to suppress the noise. Ultimately, this causes more noise and increases errors in the system, defeating the purpose, the authors of the study explained.

However, the scientists applied this paradigm to the logical layer of the qubit, rather than strictly implementing it in the hardware layer. To do this, they had to devise a way to choose and time the pulses, using a mathematical “normalizer” derived from the quantum error-correcting code running on the machine itself. This let the pulse sequence align with the structure of that code.

The result, normalizer dynamical decoupling, has produced the highest fidelity figures on a superconducting quantum computer to date. If this level of fidelity can be maintained, quantum computers can be expected to become more useful.

The number of quantum gates (individual quantum operations) a quantum system can run depends on how long it can maintain fidelity. A single gate typically takes approximately 10 to 12 nanoseconds to execute. This means that approximately 4,500 to 5,500 consecutive operations can occur in the 55 microseconds demonstrated in this study before the data is corrupted.
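The arithmetic behind that estimate can be checked in a few lines. The sketch below only divides the coherence window by the gate duration, assuming gates run back to back with no idle time or measurement overhead; the function name is hypothetical.

```python
def gates_in_window(window_us, gate_ns):
    """Number of whole gates that fit in a window, run back to back."""
    window_ns = window_us * 1000  # 1 microsecond = 1,000 nanoseconds
    return int(window_ns // gate_ns)

if __name__ == "__main__":
    print(gates_in_window(55, 10))  # 5500 gates at 10 ns each
    print(gates_in_window(55, 12))  # 4583 gates at 12 ns each
```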

The main goal of quantum computing is to create a device that can operate with high fidelity long enough to perform important operations, such as running Shor’s algorithm to break encryption. It is estimated that advanced tasks like this could one day take a capable system weeks or months to complete – which isn’t bad when you consider it could take a classical computer hundreds of billions of years to achieve the same result.

A record-breaking 55 microseconds of high-fidelity performance may appear to be far from useful, but it represents a great leap forward from previous efforts.

Vezvaee, A., Tripathi, V., Morford-Oberst, M., Butt, F., Kasatkin, V., & Lidar, D. A. (2026). Demonstration of high-fidelity entangled logical qubits using transmons. Nature Communications. https://doi.org/10.1038/s41467-026-70011-3
